Enterprise technology landscapes shift with increasing velocity. Capital allocation decisions made today must withstand market volatility, regulatory changes, and technological obsolescence for years to come. The challenge for leadership lies not in predicting the next breakthrough, but in constructing systems flexible enough to adapt when breakthroughs occur. This guide explores architectural patterns that provide resilience and scalability, ensuring technology investments deliver value over extended horizons. We focus on structural principles rather than fleeting tools, aiming to build a foundation capable of supporting long-term growth.

Understanding the Landscape of Emerging Tech 🌐

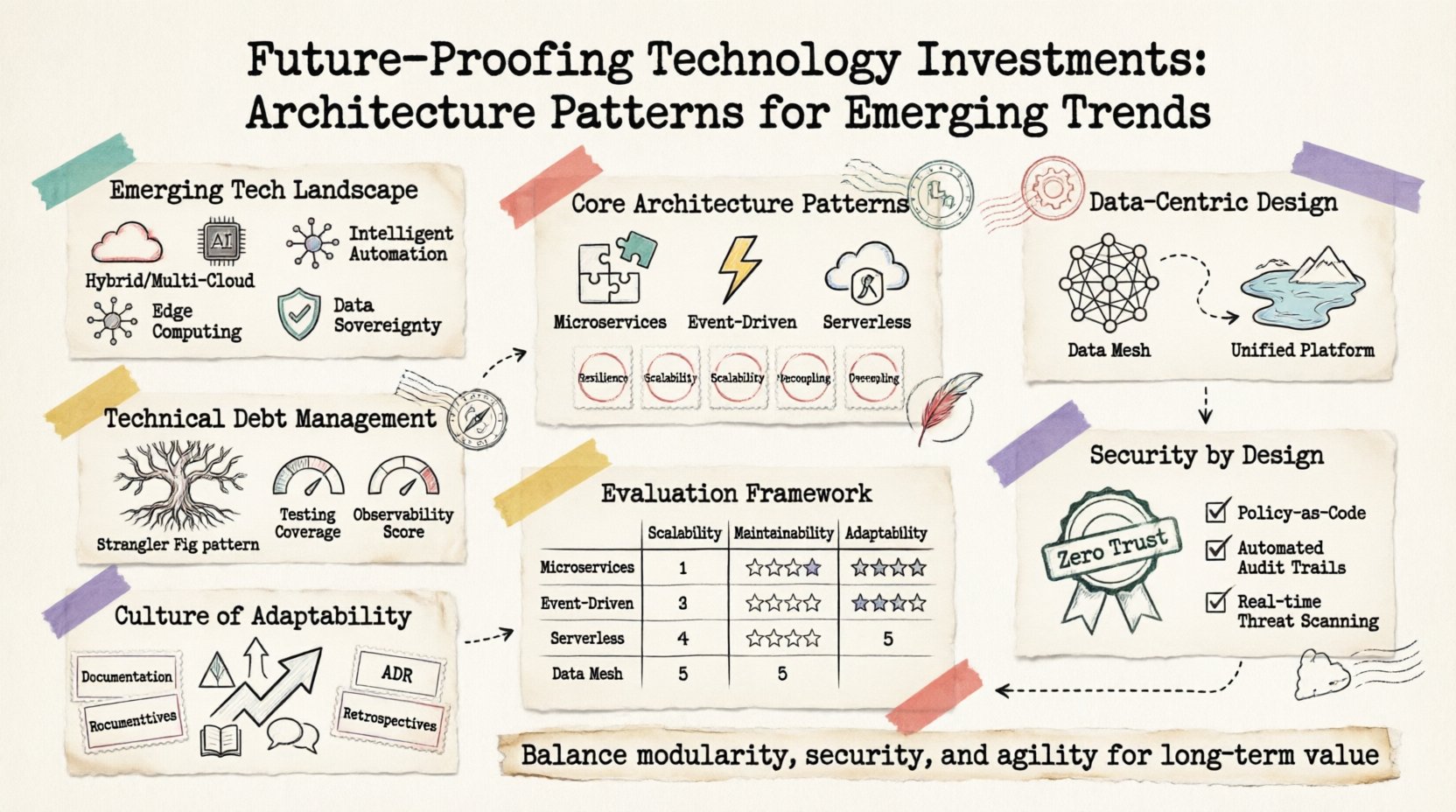

Before selecting a pattern, one must understand the forces driving change. The current environment is defined by distributed complexity, data sovereignty, and the need for real-time responsiveness. Legacy monolithic structures often struggle to accommodate these demands without significant rework. The following trends shape the architectural requirements for modern enterprises:

- Hybrid and Multi-Cloud Environments: Infrastructure is no longer siloed. Applications run across on-premises facilities, private clouds, and multiple public providers simultaneously.

- Intelligent Automation: Artificial intelligence and machine learning are moving from experimental phases to core operational functions.

- Edge Computing: Processing is shifting closer to data sources to reduce latency and bandwidth costs.

- Data Sovereignty and Privacy: Regulations require granular control over where data resides and how it is processed.

Ignoring these trends risks creating islands of technology that cannot communicate or share resources efficiently. Future-proofing requires a shift from product-centric thinking to capability-centric thinking. You must build systems that expose capabilities rather than hard-coded features: for example, a reusable payment service that any channel can call, rather than payment logic embedded in a single web application.
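A minimal sketch of the capability idea, assuming a payment capability as the example: business code depends only on a small interface, and concrete providers plug in behind it. `PaymentCapability`, the two adapters, and `checkout` are hypothetical names used for illustration, not part of any real library.

```python
from typing import Protocol


class PaymentCapability(Protocol):
    """A business capability: callers depend on this contract, not on a vendor."""

    def charge(self, account_id: str, amount_cents: int) -> str:
        ...


class LegacyGatewayAdapter:
    """Hypothetical adapter wrapping an existing in-house payment system."""

    def charge(self, account_id: str, amount_cents: int) -> str:
        return f"legacy-{account_id}-{amount_cents}"


class CloudProviderAdapter:
    """Hypothetical adapter for a newer external provider."""

    def charge(self, account_id: str, amount_cents: int) -> str:
        return f"cloud-{account_id}-{amount_cents}"


def checkout(payments: PaymentCapability, account_id: str, amount_cents: int) -> str:
    # Business logic sees only the capability, so swapping providers needs no change here.
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    return payments.charge(account_id, amount_cents)
```

Replacing `LegacyGatewayAdapter()` with `CloudProviderAdapter()` at the call site changes the provider without touching `checkout`, which is the kind of flexibility capability-centric design aims for.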

Core Architecture Patterns for Resilience 🛡️

Resilience is the ability of a system to recover from failure while maintaining service continuity. Several patterns have emerged as standards for achieving this in distributed environments.

1. Microservices and Loose Coupling

Decomposing a large application into smaller, independent services allows teams to develop, deploy, and scale components without affecting the entire ecosystem. This isolation is critical for long-term viability.

- Independent Deployment: A change to one service can be released without redeploying or fully regression-testing the entire application, provided its API contract stays compatible.

- Technology Heterogeneity: Different services can utilize the most appropriate language or database for their specific function.

- Failure Isolation: If one service fails, the rest of the system can continue operating, potentially with degraded functionality.

However, this approach introduces complexity. Network latency, service discovery, and data consistency become significant concerns. To mitigate these risks, strict governance around service boundaries and API contracts is necessary.
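Failure isolation with degraded functionality is commonly implemented with a circuit breaker. The sketch below is a minimal, single-threaded version under stated assumptions: the thresholds are arbitrary, `operation` and `fallback` are hypothetical callables (for example, a remote call and a cached response), and the injectable `clock` exists only to make the behavior testable.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Minimal circuit breaker: stops calling a failing dependency for a cool-down period."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, operation: Callable[[], str], fallback: Callable[[], str]) -> str:
        # While open, skip the dependency entirely and serve degraded output.
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                return fallback()
            # Cool-down elapsed: allow one trial call ("half-open").
            # If it fails, the failure count is still at the threshold, so it reopens.
            self.opened_at = None
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # A success closes the circuit again.
        return result
```

Short-circuiting calls to a dependency that is already failing keeps callers responsive and gives the failing service room to recover, instead of piling retries onto it.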

2. Event-Driven Architecture (EDA)

In an EDA model, components communicate through the production and consumption of events. This decouples the sender from the receiver, allowing systems to react to state changes asynchronously.

- Scalability: Consumers can scale independently based on the volume of events received.

- Resilience: If a consumer is offline, events can be queued and processed once the system recovers.

- Extensibility: New services can be added to listen to existing events without modifying the producers.

This pattern supports the need for real-time data processing. It enables the system to react to user actions, sensor data, or transactional updates immediately, rather than waiting for batch processes.
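The decoupling, buffering, and extensibility properties above can be sketched with an in-memory event bus. This is an illustrative stand-in for a real message broker, assuming one queue per subscriber; `EventBus`, the topic name, and the subscriber names are hypothetical.

```python
from collections import defaultdict, deque
from typing import Any, Callable, Deque, Dict, Tuple

Event = Dict[str, Any]
Handler = Callable[[Event], None]


class EventBus:
    """In-memory stand-in for a message broker: one queue per (topic, subscriber)."""

    def __init__(self) -> None:
        self.queues: Dict[str, Dict[str, Deque[Event]]] = defaultdict(dict)
        self.handlers: Dict[Tuple[str, str], Handler] = {}

    def subscribe(self, topic: str, name: str, handler: Handler) -> None:
        # Each subscriber gets its own queue, so consumers progress independently.
        self.queues[topic][name] = deque()
        self.handlers[(topic, name)] = handler

    def publish(self, topic: str, event: Event) -> None:
        # The producer only names a topic; it never references specific consumers.
        for queue in self.queues[topic].values():
            queue.append(event)

    def drain(self, topic: str, name: str) -> int:
        """Deliver everything queued for one subscriber, e.g. after it comes back online."""
        queue = self.queues[topic][name]
        handler = self.handlers[(topic, name)]
        delivered = 0
        while queue:
            handler(queue.popleft())
            delivered += 1
        return delivered
```

Events published while a consumer is not draining simply wait in its queue. A consumer added later, such as an audit service subscribing to `"order.placed"`, needs no change to the publisher; in this sketch it only receives events published after it subscribed, whereas log-based brokers can also let new consumers replay retained history.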

3. Serverless and Function-as-a-Service

Abstracting infrastructure management allows developers to focus on logic. Resources are allocated dynamically based on demand, eliminating idle capacity costs.

- Cost Efficiency: You pay per invocation and for execution time rather than for provisioned servers sitting idle.

- Automatic Scaling: The infrastructure scales up during peaks and scales down during troughs automatically.

- Reduced Overhead: No patching, maintenance, or capacity planning for the underlying runtime environment.

The trade-off includes potential cold start latency and vendor lock-in risks. It is best suited for sporadic workloads or specific microservices rather than persistent, high-throughput transactional systems.
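The claim that serverless suits sporadic workloads better than sustained, high-throughput ones comes down to arithmetic. The sketch below models pay-per-use pricing as a request charge plus compute billed in GB-seconds, against always-on instances billed hourly. All prices, the 730-hour month, and the workload figures are placeholder assumptions for illustration, not any provider's actual rates.

```python
def serverless_monthly_cost(requests: float, avg_duration_s: float, memory_gb: float,
                            price_per_million_requests: float,
                            price_per_gb_second: float) -> float:
    """Pay-per-use: a request charge plus compute billed in GB-seconds."""
    gb_seconds = requests * avg_duration_s * memory_gb
    return requests / 1_000_000 * price_per_million_requests + gb_seconds * price_per_gb_second


def provisioned_monthly_cost(instances: int, price_per_hour: float,
                             hours_per_month: float = 730.0) -> float:
    """Always-on capacity is billed whether it is busy or idle."""
    return instances * price_per_hour * hours_per_month


def break_even_requests(provisioned_cost: float, avg_duration_s: float, memory_gb: float,
                        price_per_million_requests: float,
                        price_per_gb_second: float) -> float:
    """Monthly request volume above which serverless costs more than provisioned capacity."""
    cost_per_request = (price_per_million_requests / 1_000_000
                        + avg_duration_s * memory_gb * price_per_gb_second)
    return provisioned_cost / cost_per_request


# Placeholder prices and workload, for illustration only.
PRICE_PER_MILLION = 0.20
PRICE_PER_GB_S = 0.00002
sporadic = serverless_monthly_cost(1_000_000, 0.2, 0.5, PRICE_PER_MILLION, PRICE_PER_GB_S)
always_on = provisioned_monthly_cost(2, 0.05)
threshold = break_even_requests(always_on, 0.2, 0.5, PRICE_PER_MILLION, PRICE_PER_GB_S)
```

With these made-up numbers, one million 200 ms requests cost about 2.20 per month against 73.00 for two always-on instances, and the break-even sits near 33 million requests per month. The model ignores free tiers, cold starts, and whether two instances could actually absorb that load, so treat it as a template for your own figures.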

Data-Centric Design Strategies 💾

Data is among the most valuable assets in modern enterprise architecture. How data is structured, governed, and accessed determines the speed of innovation. Traditional centralized data warehouses often become bottlenecks because every new source and every new report must pass through a single central team.

Data Mesh Principles

Data mesh treats data as a product. It decentralizes data ownership to the domain teams that generate the data, rather than concentrating it in a central data team.

- Domain Ownership: Teams are responsible for the quality, accessibility, and documentation of their data.

- Self-Serve Infrastructure: A shared platform provides the tools for teams to build, publish, and manage their data products without filing requests with a central team.

- Federated Governance: Global policies are defined centrally and enforced within each domain, ideally automatically, ensuring compliance without stifling autonomy.

- Computational Decoupling: Data is stored and processed in the location best suited to its specific use case.

This approach reduces the burden on central IT teams and accelerates data availability for analytics and AI initiatives. It requires a cultural shift towards treating data as a service with defined service level agreements.
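Treating data as a product with defined service levels can be made concrete with a small descriptor that each domain publishes, plus global checks that run against every product. The sketch below is illustrative only: `DataProduct`, its fields, and the three rules in `governance_violations` are hypothetical examples of federated, automated governance, not a standard schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class DataProduct:
    """Hypothetical descriptor a domain team publishes alongside its data."""
    name: str
    owner_team: str
    description: str
    freshness_sla: timedelta  # maximum acceptable age of the data
    last_updated: datetime


def governance_violations(product: DataProduct,
                          now: Optional[datetime] = None) -> List[str]:
    """Global rules defined once and evaluated automatically in every domain."""
    now = now or datetime.now(timezone.utc)
    problems: List[str] = []
    if not product.owner_team.strip():
        problems.append("missing owner")
    if not product.description.strip():
        problems.append("missing documentation")
    if now - product.last_updated > product.freshness_sla:
        problems.append("freshness SLA breached")
    return problems
```

The domain team owns the descriptor and the data behind it; the central function only owns the rules, which is the division of responsibility the principles above describe.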

Unified Data Platforms

While mesh promotes distribution, a unified platform ensures discoverability. A data lakehouse architecture combines the flexibility of data lakes with the management features of data warehouses.

- Single Source of Truth: Analysts and engineers access consistent data structures.

- ACID Transactions: Atomic, isolated writes preserve data integrity even when multiple jobs update the same tables concurrently.

- Performance Optimization: Indexing and partitioning strategies are managed centrally for query speed.

Managing Technical Debt in Evolution 📉

Every system accumulates technical debt over time. Ignoring it leads to stagnation, while aggressive refactoring risks instability. A balanced approach is required to maintain investment value.

Incremental Modernization

Instead of a “big bang” rewrite, adopt the strangler fig pattern: gradually replace the functionality of a legacy system with new microservices, routing each piece of traffic to the new implementation once it is ready. This allows continuous delivery while reducing risk.

- Risk Mitigation: If the new service fails, the legacy system remains active.

- Feedback Loops: Real-world usage informs the development of the new components.

- Resource Allocation: Teams can work on modernization without halting business operations.
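The strangler fig approach usually hinges on a routing facade in front of the legacy system. The sketch below assumes requests can be routed by path prefix; `StranglerFacade`, the prefixes, and the handler callables are hypothetical, and a real facade would typically sit in an API gateway or reverse proxy.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


class StranglerFacade:
    """Routes each request to a new service if its path has been migrated, else to the legacy system."""

    def __init__(self, legacy: Handler) -> None:
        self.legacy = legacy
        self.migrated: Dict[str, Handler] = {}

    def migrate(self, path_prefix: str, new_service: Handler) -> None:
        # Cutting over one slice of functionality is a routing change, not a rewrite.
        self.migrated[path_prefix] = new_service

    def handle(self, path: str) -> str:
        # Longest matching prefix wins, so migrations can become progressively finer-grained.
        for prefix in sorted(self.migrated, key=len, reverse=True):
            if path.startswith(prefix):
                try:
                    return self.migrated[prefix](path)
                except Exception:
                    # The legacy code path is still deployed, so it acts as a fallback.
                    return self.legacy(path)
        return self.legacy(path)
```

Falling back to the legacy path is only safe while the legacy system still has current data for that function and the operation is safe to retry; once a slice is fully migrated, the fallback and eventually the legacy code can be removed.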

Automated Testing and Observability

Debt is manageable only when visibility exists. Comprehensive logging, tracing, and monitoring allow teams to identify performance degradation early.

- End-to-End Tracing: Follow requests across multiple services to identify bottlenecks.

- Automated Regression: Prevent new code from breaking existing functionality.

- Health Checks: Automated verification of system components ensures readiness.
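Health checks are most useful when they distinguish "cannot serve" from "serving with reduced functionality". A minimal sketch, assuming each component exposes a zero-argument check returning `True` when healthy; `run_health_checks` and the component names are hypothetical.

```python
from typing import AbstractSet, Callable, Dict, Tuple


def run_health_checks(checks: Dict[str, Callable[[], bool]],
                      critical: AbstractSet[str]) -> Tuple[str, Dict[str, str]]:
    """Aggregate component checks into an overall readiness status."""
    results: Dict[str, str] = {}
    for name, check in checks.items():
        try:
            results[name] = "ok" if check() else "failing"
        except Exception as exc:
            # A check that crashes counts as failing, with the reason recorded.
            results[name] = f"error: {exc}"
    failing = {name for name, status in results.items() if status != "ok"}
    if failing & critical:
        overall = "unhealthy"  # a critical dependency is down: stop routing traffic here
    elif failing:
        overall = "degraded"   # only optional components are down: keep serving
    else:
        overall = "healthy"
    return overall, results
```

A load balancer or orchestrator could poll this result, removing an instance only when it reports `unhealthy` while alerting on `degraded`.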

Security and Compliance by Design 🔒

Security cannot be an afterthought. It must be embedded into the architecture from the initial design phase. The traditional perimeter model is insufficient for distributed systems.

Zero Trust Architecture

Never trust, always verify. Every access request must be authenticated and authorized, regardless of location.

- Identity-Centric: Access is granted based on user identity and context, not network location.

- Least Privilege: Users and services receive only the minimum permissions required.

- Micro-Segmentation: Network traffic is restricted to specific flows, limiting lateral movement.
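The identity-centric and least-privilege principles can be sketched as a deny-by-default authorization function. This is a simplified illustration under stated assumptions: `AccessRequest`, the `GRANTS` table, and the action names are hypothetical, and a production system would delegate these decisions to a dedicated policy engine and identity provider.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class AccessRequest:
    principal: str          # authenticated user or service identity
    action: str             # e.g. "orders:read"
    authenticated: bool     # e.g. valid token or mutual-TLS certificate
    device_compliant: bool  # context signal, e.g. a managed, patched device or workload
    source_network: str     # recorded for auditing but deliberately NOT used to grant trust


# Hypothetical least-privilege grants: each identity lists only what it needs.
GRANTS: Dict[str, FrozenSet[str]] = {
    "svc-billing": frozenset({"orders:read", "invoices:write"}),
    "alice": frozenset({"orders:read"}),
}


def authorize(req: AccessRequest) -> bool:
    """Deny by default; evaluate every request on identity and context, never location."""
    if not (req.authenticated and req.device_compliant):
        return False
    return req.action in GRANTS.get(req.principal, frozenset())
```

Note that a request from an internal address receives no special treatment: an unknown principal or an ungranted action is refused regardless of where it originates.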

Compliance Automation

Regulatory requirements change frequently. Code-based compliance checks ensure that architecture adheres to standards automatically.

- Infrastructure as Code: Deployments are version-controlled and auditable.

- Policy as Code: Security rules are enforced by the deployment pipeline.

- Continuous Auditing: Real-time monitoring detects configuration drift.
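Policy as code and drift detection can both be expressed as plain functions over resource configurations. The sketch assumes configurations are simple dictionaries; the policy rules, the `encrypted` and `region` keys, and the region names are hypothetical placeholders rather than any specific tool's schema.

```python
from typing import AbstractSet, Any, Dict, List

Config = Dict[str, Any]


def policy_violations(name: str, config: Config, allowed_regions: AbstractSet[str]) -> List[str]:
    """Policy as code: rules a deployment pipeline could run before applying changes."""
    issues: List[str] = []
    if not config.get("encrypted", False):
        issues.append(f"{name}: encryption at rest is disabled")
    if config.get("region") not in allowed_regions:
        issues.append(f"{name}: region {config.get('region')!r} is not approved")
    return issues


def detect_drift(desired: Dict[str, Config], actual: Dict[str, Config]) -> Dict[str, List[str]]:
    """Continuous auditing: compare version-controlled intent with the live state."""
    drift: Dict[str, List[str]] = {}
    for name in desired.keys() | actual.keys():
        want, have = desired.get(name), actual.get(name)
        if want is None:
            drift[name] = ["exists but is not declared in code"]
        elif have is None:
            drift[name] = ["declared in code but missing"]
        else:
            changed = sorted(k for k in want.keys() | have.keys() if want.get(k) != have.get(k))
            if changed:
                drift[name] = [f"{k}: expected {want.get(k)!r}, found {have.get(k)!r}"
                               for k in changed]
    return drift
```

Running `policy_violations` in the pipeline blocks non-compliant changes before deployment, while running `detect_drift` on a schedule catches manual changes made after deployment.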

Evaluation Framework for Investments 📊

How do you decide which pattern fits your organization? A structured evaluation framework helps align technology choices with business goals.

| Pattern | Best Use Case | Complexity | Scaling Model |
|---|---|---|---|
| Monolithic | Simple applications, small teams | Low | Vertical (whole application) |
| Microservices | Complex domains, large teams | High | Horizontal, per service |
| Event-Driven | Real-time data, asynchronous tasks | Medium | Horizontal, per consumer |
| Serverless | Variable workloads, sporadic usage | Medium | Automatic, per function |

When evaluating options, consider the following metrics:

- Time to Market: How quickly can new features be delivered?

- Total Cost of Ownership: Include infrastructure, maintenance, and personnel costs.

- Operational Overhead: How much effort is required to keep the system running?

- Vendor Risk: What is the impact if a provider changes terms or shuts down?
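One common way to combine these metrics is a weighted decision matrix. The sketch below is a generic illustration: the criteria mirror the list above, but the weights are placeholders, and the 1 to 5 scores must come from your own assessment (oriented so that higher is always better, e.g. 5 means lowest cost or least vendor risk).

```python
from typing import Dict, List

Scores = Dict[str, float]

# Placeholder weights; set them to reflect your organization's priorities.
WEIGHTS: Scores = {
    "time_to_market": 0.3,
    "total_cost_of_ownership": 0.3,
    "operational_overhead": 0.2,
    "vendor_risk": 0.2,
}


def weighted_score(scores: Scores, weights: Scores) -> float:
    """Weighted average of 1-5 scores where higher is always better."""
    if scores.keys() != weights.keys():
        raise ValueError("score every criterion exactly once")
    return sum(scores[c] * w for c, w in weights.items()) / sum(weights.values())


def rank(options: Dict[str, Scores], weights: Scores) -> List[str]:
    """Order candidate patterns from best to worst weighted score."""
    return sorted(options, key=lambda name: weighted_score(options[name], weights), reverse=True)
```

The value lies less in the final number than in making the weights explicit, so that disagreements about priorities surface before a pattern is chosen.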

Building a Culture of Adaptability 🔄

Architecture is only as strong as the people who maintain it. Investing in technology requires investing in the workforce. Continuous learning and knowledge sharing prevent bottlenecks where only one person understands a critical system.

- Documentation: Architecture decision records (ADRs) capture the reasoning behind choices.

- Review Cycles: Regular architecture reviews ensure patterns remain aligned with goals.

- Experimentation: Allow time for prototyping new technologies in a safe environment.

By fostering a culture that values transparency and continuous improvement, organizations can navigate technological shifts with confidence. The goal is not to eliminate change, but to build systems that embrace it.

Final Thoughts on Strategic Alignment 🎯

Future-proofing is a continuous process, not a one-time project. It requires constant vigilance and a willingness to evolve. By adopting robust architectural patterns, prioritizing data governance, and embedding security into the design, enterprises can secure their technology investments for the long term. The focus remains on creating value, maintaining agility, and ensuring that the technology serves the business, not the other way around.

Remember that the most resilient systems are those designed with simplicity and modularity in mind. Avoid over-engineering, but do not compromise on the fundamentals of reliability and security. Balance is key to sustainable growth in a dynamic digital economy.