In the modern enterprise, data is not merely a byproduct of operations; it is the foundational asset that drives decision-making, innovation, and regulatory adherence. However, without a structured approach, this asset becomes a liability. A robust Data Governance Architecture Framework provides the necessary structure to manage data effectively across the entire organization. This guide outlines the essential components required to build a resilient framework that prioritizes data quality, security, and compliance within the context of Enterprise Architecture.

🔍 Defining the Data Governance Architecture

Data Governance Architecture is the blueprint that defines how data assets are managed throughout their lifecycle. It integrates people, processes, and policies into a cohesive system that supports business goals while mitigating risk. Unlike ad-hoc governance efforts, an architectural approach ensures scalability and consistency.

Key objectives of this framework include:

- Establishing clear ownership and accountability for data.

- Defining standards for data quality and integrity.

- Ensuring alignment with regulatory requirements such as GDPR, CCPA, and industry-specific mandates.

- Facilitating secure data sharing and accessibility for authorized users.

- Supporting strategic decision-making through reliable information.

Without this architecture, organizations often face siloed data, inconsistent definitions, and increased exposure to security breaches. The framework acts as the control plane for all data-related activities.

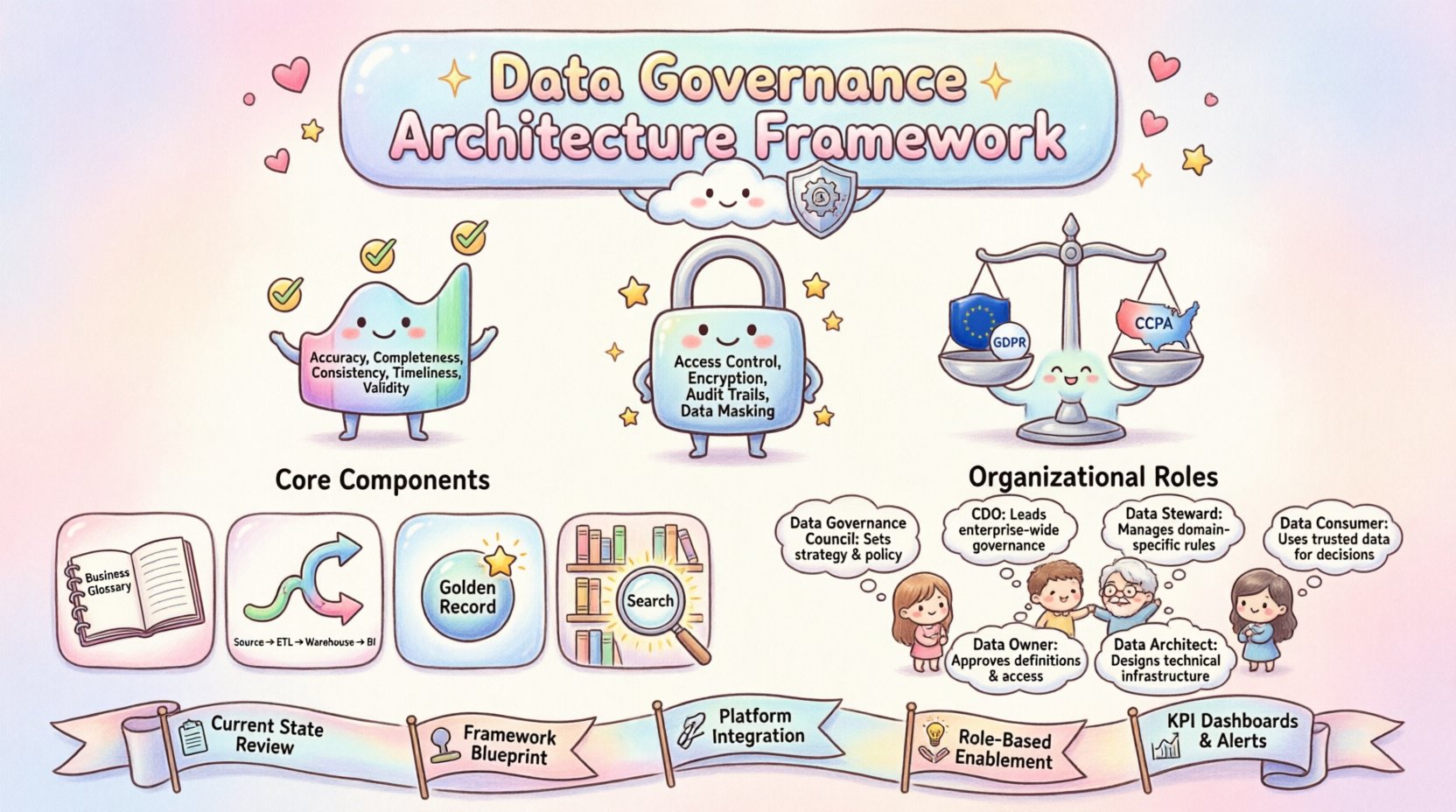

🛡️ The Three Core Pillars

A successful architecture rests on three non-negotiable pillars. Each pillar must be addressed simultaneously to avoid gaps that could compromise the integrity of the enterprise.

1. Data Quality 📊

Quality is the measure of data fitness for its intended use. Poor data quality leads to erroneous insights, operational inefficiencies, and loss of trust. The framework must define metrics for quality, including:

- Accuracy: Does the data correctly represent the real-world entity?

- Completeness: Are all required fields populated?

- Consistency: Is the data uniform across different systems?

- Timeliness: Is the data current and available when it is needed?

- Validity: Does the data conform to defined formats and ranges?

Implementing quality controls requires automated validation rules and manual review processes. Data profiling is essential to identify anomalies before they propagate through downstream systems.
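
As a rough illustration of automated validation rules, the sketch below scores two of the dimensions listed above, completeness and validity, over a handful of records. The sample records, the field names, and the simplified email pattern are assumptions made for the example, not part of any particular tool.

```python
import re
from datetime import date

# Hypothetical sample records; field names are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "signup": date(2024, 1, 5)},
    {"id": 2, "email": "not-an-email", "signup": date(2024, 2, 9)},
    {"id": 3, "email": None, "signup": None},
]

REQUIRED = ("id", "email", "signup")
# Deliberately simple pattern (an assumption); real email validation is stricter.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def completeness(rows, fields=REQUIRED):
    """Share of required field values that are populated."""
    total = len(rows) * len(fields)
    filled = sum(1 for r in rows for f in fields if r.get(f) is not None)
    return filled / total if total else 1.0


def validity(rows):
    """Share of populated emails that match the expected format."""
    emails = [r["email"] for r in rows if r.get("email") is not None]
    valid = sum(1 for e in emails if EMAIL_RE.match(e))
    return valid / len(emails) if emails else 1.0


print(f"completeness={completeness(records):.2f}, validity={validity(records):.2f}")
```

Scores like these can be compared against agreed thresholds, with records that fail routed to a Data Steward for manual review.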

2. Data Security 🔒

Security ensures that data is protected from unauthorized access, alteration, or destruction. In an architecture context, security is not just an add-on feature but a design principle. Key considerations include:

- Access Control: Implementing Role-Based Access Control (RBAC) so that users can access only the data their roles require.

- Encryption: Protecting data at rest and in transit using industry-standard cryptographic methods.

- Audit Trails: Maintaining logs of who accessed which data, and when, to support forensic analysis.

- Data Masking: Hiding sensitive information in non-production environments to prevent leaks.

Security policies must be enforced automatically where possible to reduce human error and ensure compliance.
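
A minimal sketch of two of the controls above, an RBAC permission check and a masking function for non-production copies, might look like the following. The role names, the permission strings, and the masking rule are hypothetical; a real deployment would load policies from a central store rather than hard-coding them.

```python
# Hypothetical role-to-permission mapping, hard-coded for illustration.
ROLE_PERMISSIONS = {
    "analyst": {"sales:read"},
    "steward": {"sales:read", "sales:write", "customers:read"},
}


def is_allowed(role, permission):
    """RBAC check: a role grants access only to its listed permissions."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def mask_email(email):
    """Mask the local part of an email, keeping only its first character."""
    local, _, domain = email.partition("@")
    if not domain:
        # Not shaped like an email: mask the whole value.
        return "*" * len(email)
    return local[:1] + "*" * (len(local) - 1) + "@" + domain


print(is_allowed("analyst", "customers:read"))
print(mask_email("ana@example.com"))
```

Unknown roles fall through to an empty permission set, so the check denies by default.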

3. Compliance ⚖️

Compliance involves adhering to legal and regulatory standards. As regulations evolve, the architecture must be flexible enough to adapt. This includes:

- Identifying which data elements are subject to regulation (e.g., PII, PHI).

- Defining retention and disposal policies.

- Ensuring data sovereignty requirements are met for cross-border transfers.

- Managing consent preferences for marketing and operational communications.

A compliance-focused framework reduces legal risk and enhances the organization’s reputation among stakeholders.
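
Retention and disposal policies can be expressed as data so that they are easy to update when regulations change. The sketch below assumes a hypothetical mapping from classification to retention period; the periods shown are placeholders, not legal guidance.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days per classification.
# None means "no disposal deadline". Actual values depend on the
# regulations that apply to the organization.
RETENTION_DAYS = {"PII": 365, "PHI": 6 * 365, "PUBLIC": None}


def is_due_for_disposal(classification, created_on, today):
    """Return True when a record has outlived its retention period.

    Raises KeyError for an unknown classification so that unclassified
    data is surfaced instead of being silently kept or deleted.
    """
    days = RETENTION_DAYS[classification]
    if days is None:
        return False
    return today > created_on + timedelta(days=days)


print(is_due_for_disposal("PII", date(2023, 1, 1), date(2024, 6, 1)))
```

Keeping the policy in a table like this means a regulatory change becomes a configuration update rather than a code change.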

🧱 Core Components of the Framework

To operationalize the pillars above, the architecture must include specific functional components. These components work together to manage the data lifecycle from creation to archival.

1. Metadata Management 📝

Metadata is data about data. It provides context and meaning to raw information. A centralized metadata repository allows users to understand the lineage, definition, and usage of data assets. This component supports:

- Business Glossary: A dictionary of common terms and definitions.

- Technical Metadata: System schemas, data types, and storage locations.

- Operational Metadata: Information about data processing jobs and execution logs.

2. Data Lineage & Impact Analysis 🔄

Understanding where data comes from and where it goes is critical for trust and troubleshooting. Data lineage maps the flow of data across systems, transformations, and processes. This capability enables:

- Root cause analysis when data quality issues arise.

- Impact assessment before changing a data structure.

- Transparency for auditors and regulators.
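
Lineage is naturally modeled as a directed graph, which makes impact assessment and root cause analysis simple traversals. The following sketch uses a small hypothetical set of dataset names and edges; lineage tools typically derive such edges from pipeline metadata rather than from a hand-written dictionary.

```python
from collections import deque

# Hypothetical lineage edges: each key feeds the datasets in its list.
LINEAGE = {
    "crm.customers": ["staging.customers"],
    "staging.customers": ["warehouse.dim_customer"],
    "warehouse.dim_customer": ["reports.churn", "reports.revenue"],
    "erp.orders": ["reports.revenue"],
}


def downstream(dataset, graph=LINEAGE):
    """Impact analysis: breadth-first walk over everything fed by `dataset`."""
    seen, queue = set(), deque([dataset])
    while queue:
        for child in graph.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def upstream(dataset, graph=LINEAGE):
    """Root cause analysis: walk the same edges in reverse."""
    reverse = {}
    for src, targets in graph.items():
        for tgt in targets:
            reverse.setdefault(tgt, []).append(src)
    return downstream(dataset, reverse)


print(sorted(downstream("staging.customers")))
print(sorted(upstream("reports.revenue")))
```

Before changing `staging.customers`, `downstream` lists the reports that could break; when `reports.revenue` looks wrong, `upstream` lists the sources worth checking.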

3. Master Data Management (MDM) 🌐

MDM ensures that critical business entities (like customers, products, or employees) have a single, authoritative version of the truth. This reduces duplication and ensures consistency across the enterprise.

- Define golden records for key entities.

- Establish rules for merging and matching records.

- Manage identity resolution across disparate sources.
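
To make matching and merging concrete, here is a minimal sketch that builds golden records from duplicate source rows. It assumes two simplifications that a real MDM platform would not: records match on a normalized email address alone, and the survivorship rule keeps the most recently updated non-empty value for each field. The field names and sample rows are hypothetical.

```python
def match_key(record):
    """Normalize the email so trivially different spellings match."""
    return record["email"].strip().lower()


def merge(records):
    """Build one golden record per match key.

    Survivorship rule (an assumption): for each field, keep the most
    recently updated non-empty value.
    """
    golden = {}
    # Process oldest first so newer values overwrite older ones.
    for rec in sorted(records, key=lambda r: r["updated"]):
        target = golden.setdefault(match_key(rec), {})
        for field, value in rec.items():
            if value not in (None, ""):
                target[field] = value
    return golden


# Hypothetical rows from two source systems; "updated" is a version counter.
sources = [
    {"email": "Ana@Example.com ", "name": "Ana Diaz", "phone": "", "updated": 1},
    {"email": "ana@example.com", "name": "Ana M. Diaz", "phone": "555-0100", "updated": 2},
    {"email": "bo@example.com", "name": "Bo Li", "phone": None, "updated": 1},
]

for key, record in merge(sources).items():
    print(key, record)
```

Production matching usually combines several attributes and fuzzy comparison; exact matching on one key is used here only to keep the example short.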

4. Data Catalog 📚

A data catalog serves as a searchable inventory of all data assets. It empowers users to discover and understand data without needing deep technical knowledge. Features include:

- Search functionality based on tags and keywords.

- Rating and commenting systems for community feedback.

- Integration with BI and analytics tools.
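
The search feature can be sketched as a filter over catalog entries. The `CatalogEntry` structure, the dataset names, and the tags below are hypothetical; commercial and open-source catalogs expose richer models and ranking.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    name: str
    description: str
    tags: set = field(default_factory=set)


# Hypothetical catalog contents.
CATALOG = [
    CatalogEntry("sales.orders", "Confirmed customer orders", {"sales", "pii"}),
    CatalogEntry("hr.employees", "Employee master data", {"hr", "pii"}),
    CatalogEntry("web.clicks", "Raw clickstream events", {"marketing"}),
]


def search(entries, keyword=None, tag=None):
    """Return names of entries matching a keyword and/or a tag."""
    hits = []
    for entry in entries:
        text = f"{entry.name} {entry.description}".lower()
        if keyword and keyword.lower() not in text:
            continue
        if tag and tag not in entry.tags:
            continue
        hits.append(entry.name)
    return hits


print(search(CATALOG, tag="pii"))
print(search(CATALOG, keyword="customer"))
```

When both arguments are given, an entry must satisfy both, which mirrors how users typically narrow a keyword search with a tag filter.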

👥 Organizational Structure and Roles

Technology alone cannot govern data. A clear organizational structure defines who is responsible for what. The following table outlines key roles and their responsibilities within the framework.

| Role | Primary Responsibility | Key Output |
|---|---|---|
| Data Governance Council | Strategic oversight and policy approval | Governance Charter, Strategic Roadmap |
| Chief Data Officer (CDO) | Overall accountability for data strategy | Data Vision, Investment Prioritization |
| Data Steward | Daily management of data quality and definitions | Data Definitions, Quality Reports |
| Data Owner | Accountability for specific data domains | Access Approvals, Risk Decisions |
| Data Architect | Designing the technical implementation | Integration Patterns, Security Standards |
| Data Consumer | Using data for business value | Feedback on Quality, Usage Patterns |

Clarity in these roles prevents ambiguity. For example, a Data Owner approves access policies, while a Data Steward ensures the data is accurate. This separation of duties is vital for effective control.

🚀 Implementation Lifecycle

Building a framework is a multi-stage process. Rushing this implementation often leads to resistance and failure. A phased approach allows for iterative improvement and stakeholder buy-in.

Phase 1: Assessment & Strategy 📋

Begin by evaluating the current state of data management. Identify pain points, regulatory gaps, and existing capabilities. Define the target state and the gap between the two. This phase sets the scope and secures executive sponsorship.

Phase 2: Design & Standards 🏗️

Develop the policies, standards, and processes. Define the data taxonomy and classification schema. Establish the architecture for metadata and lineage tracking. Ensure these standards are documented and accessible.

Phase 3: Tooling & Integration 🔗

Select and deploy the necessary tools to support the framework. This includes platforms for cataloging, security, and quality monitoring. Ensure these tools integrate seamlessly with existing data pipelines and storage systems. Avoid creating new silos during this step.

Phase 4: Training & Adoption 🎓

Technology fails without people. Conduct training sessions for Data Stewards and business users. Create communication campaigns to highlight the benefits of better data. Encourage a culture where data quality is everyone’s responsibility.

Phase 5: Monitoring & Optimization 📈

Once operational, continuously monitor the framework. Track key performance indicators to measure success. Gather feedback from users to refine processes. Regularly review policies to ensure they remain relevant as business needs change.

📊 Metrics and KPIs

To demonstrate the value of the framework, you must measure its performance. Use the following metrics to track progress and identify areas for improvement.

- Data Quality Score: Percentage of records meeting quality thresholds.

- Issue Resolution Time: Average time taken to fix data quality incidents.

- Coverage Rate: Percentage of critical data assets covered by governance policies.

- Access Request Turnaround: Time taken to process access approvals.

- Compliance Audit Pass Rate: Percentage of audits passed without major findings.

- Data Asset Utilization: Number of active users consuming specific datasets.

Regular reporting on these metrics keeps stakeholders informed and accountable.
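
Three of the metrics above reduce to simple arithmetic, sketched below. The function names, the incident structure, and the sample figures are assumptions for illustration; in practice the inputs would come from quality monitoring and ticketing systems.

```python
from datetime import datetime
from statistics import mean


def quality_score(passed, total):
    """Percentage of records meeting quality thresholds."""
    return 100.0 * passed / total if total else 0.0


def mean_resolution_hours(incidents):
    """Average hours between opening and resolving closed incidents."""
    durations = [
        (i["resolved"] - i["opened"]).total_seconds() / 3600
        for i in incidents
        if i.get("resolved") is not None
    ]
    return mean(durations) if durations else None


def coverage_rate(critical_assets, governed_assets):
    """Percentage of critical assets covered by a governance policy."""
    critical = set(critical_assets)
    if not critical:
        return 0.0
    return 100.0 * len(critical & set(governed_assets)) / len(critical)


# Hypothetical incident log; the third incident is still open.
incidents = [
    {"opened": datetime(2024, 3, 1, 9), "resolved": datetime(2024, 3, 1, 13)},
    {"opened": datetime(2024, 3, 2, 9), "resolved": datetime(2024, 3, 2, 17)},
    {"opened": datetime(2024, 3, 3, 9), "resolved": None},
]

print(quality_score(950, 1000))
print(mean_resolution_hours(incidents))
print(coverage_rate(["a", "b", "c", "d"], ["a", "b", "x"]))
```

Open incidents are excluded from the resolution average here; reporting their count separately avoids hiding a growing backlog.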

⚠️ Common Challenges and Mitigation

Implementing a data governance architecture is complex. Recognizing potential pitfalls early can save significant time and resources.

Challenge 1: Resistance to Change 🛑

Users may view governance as bureaucracy that slows down work. Mitigation: Focus on enabling self-service capabilities that speed up access while maintaining control. Show quick wins to demonstrate value.

Challenge 2: Lack of Executive Support 📉

Without top-level backing, initiatives often stall. Mitigation: Align governance goals with business outcomes such as revenue growth or risk reduction. Speak the language of the business, not just IT.

Challenge 3: Siloed Data Sources 🏝️

Data often lives in disconnected systems. Mitigation: Prioritize high-value integration points first. Use abstraction layers to unify access without necessarily moving all data physically.

Challenge 4: Evolving Regulations 📜

Compliance requirements change frequently. Mitigation: Build flexibility into the policy engine. Regularly review the regulatory landscape and update definitions accordingly.

🔮 Future Trends in Data Governance

The landscape of data management is evolving. Staying ahead requires awareness of emerging trends that will shape the future of the framework.

- Automated Governance: Using AI to detect anomalies and enforce policies with minimal manual intervention.

- Data Mesh: A decentralized approach to architecture that treats data as a product, with domain teams owning their data while following shared, federated governance standards.

- Privacy-Preserving Computation: Techniques such as differential privacy, homomorphic encryption, and secure multi-party computation that allow data analysis without exposing raw sensitive information.

- Real-Time Governance: Moving from batch-based checks to continuous monitoring of data streams.

🔗 Integrating with Enterprise Architecture

Data Governance does not exist in a vacuum. It must align with the broader Enterprise Architecture. This ensures that data initiatives support the overall IT strategy.

- Application Architecture: Ensure new applications comply with data standards at the design stage.

- Infrastructure Architecture: Plan storage and compute resources with security and governance requirements in mind.

- Business Architecture: Map data flows to business processes to identify critical data needs.

This alignment prevents fragmentation and ensures that data governance is a strategic enabler rather than a technical constraint.

🛠️ Establishing a Data Culture

The most robust technical framework will fail if the organizational culture does not support it. A data-driven culture values evidence over intuition.

- Leadership Example: Leaders must use data in their own decision-making processes.

- Recognition: Reward teams that improve data quality or identify governance issues.

- Communication: Share success stories and lessons learned regularly.

- Education: Provide ongoing training to improve data literacy across the organization.

Culture shifts take time. Patience and consistency are key to embedding these values into the daily workflow.

📝 Summary of Best Practices

To ensure long-term success, adhere to these core principles when designing and operating your framework.

- Start Small: Begin with a pilot domain to prove value before expanding.

- Keep it Simple: Avoid overly complex policies that are difficult to enforce.

- Automate Where Possible: Reduce manual effort to minimize errors and fatigue.

- Collaborate: Involve business units in the design process to ensure relevance.

- Iterate: Treat the framework as a living system that evolves with the business.

By following these guidelines, organizations can build a framework that not only protects their assets but also unlocks their potential for growth and innovation.