In the modern enterprise, technology evolves faster than traditional procurement cycles can accommodate. Leaders face a constant influx of new tools, platforms, and methodologies. Without a structured approach, this influx can lead to shadow IT, fragmented architectures, and wasted investment. A robust technology scouting framework provides the discipline needed to identify, assess, and integrate emerging solutions while staying aligned with enterprise architecture goals. This guide outlines the essential components of such a framework so that innovation drives value without compromising stability. 🏗️

Why a Formal Scouting Framework Matters 🤔

Enterprise architecture (EA) is not merely about documenting current systems; it is about steering the organization toward a future state. When teams adopt technology in isolation, technical debt accumulates rapidly. A formal scouting process mitigates this risk by introducing checks and balances.

Key benefits include:

- Strategic Alignment: Ensures new tools support business objectives rather than diverting resources.

- Risk Mitigation: Identifies security, compliance, and operational risks before full-scale deployment.

- Cost Efficiency: Prevents duplicate investments and redundant licensing fees.

- Scalability: Verifies that solutions can grow alongside the organization.

- Interoperability: Confirms new systems can communicate effectively with legacy infrastructure.

Without this framework, organizations often fall into the trap of “shiny object syndrome,” where attention is drawn to the latest trend without verifying its practical utility. The goal is not to resist change, but to manage it with intention.

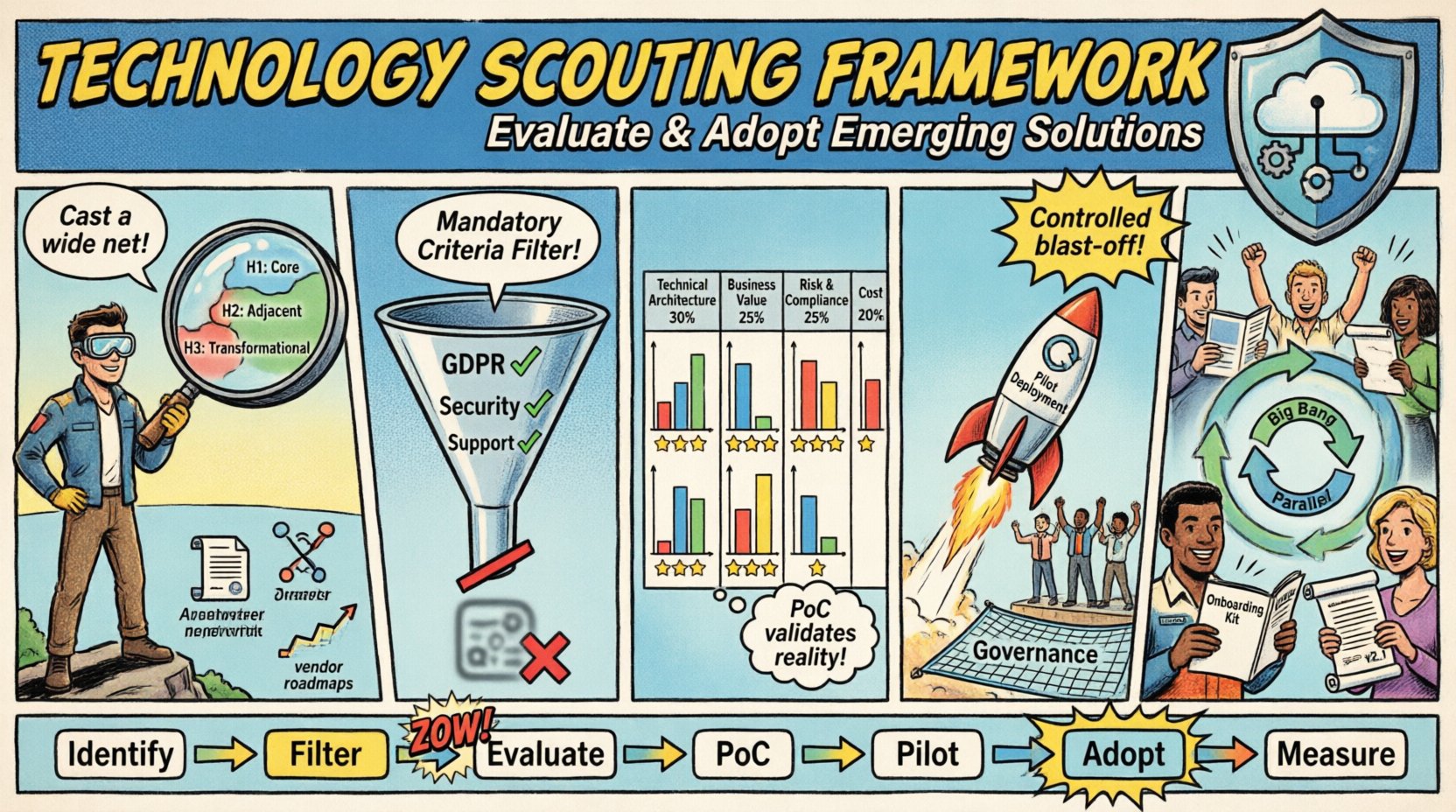

Phase 1: Discovery and Identification 🔍

The first step in the technology scouting framework is identifying potential candidates. This phase is about casting a wide net while maintaining focus on the organization’s strategic priorities.

1.1 Define Innovation Horizons

Not all technologies serve the same purpose. Categorize potential solutions based on their timeline and impact:

- Horizon 1 (Core): Improvements to existing systems. Focus on efficiency and cost reduction.

- Horizon 2 (Adjacent): Expansions into new markets or capabilities. Focus on growth and integration.

- Horizon 3 (Transformational): Radical shifts in how business operates. Focus on disruption and future readiness.

By categorizing opportunities, architects can allocate resources appropriately. Horizon 1 initiatives require rigorous stability testing, while Horizon 3 projects may tolerate higher risk for greater potential reward.

1.2 Sources of Intelligence

Effective scouting relies on diverse information streams. Relying on a single source creates blind spots. Organizations should monitor:

- Industry Analyst Reports: Third-party assessments of market trends and vendor maturity.

- Peer Networks: Conversations with other organizations facing similar challenges.

- Community Forums: Technical discussions regarding implementation nuances and common pitfalls.

- Internal Feedback: Input from development teams and end-users who encounter limitations in current tools.

- Vendor Roadmaps: Understanding the direction in which technology providers are steering their products.

Establishing a dedicated team or committee to gather this intelligence ensures consistency. This group acts as the central hub for all scouting activities, preventing fragmented efforts across departments.

Phase 2: Initial Assessment and Filtering 🧹

Once potential solutions are identified, they must be filtered against baseline requirements. This stage prevents deep investment in technologies that do not fit the environment.

2.1 Mandatory Criteria Checklist

Before conducting a detailed analysis, apply a pass/fail filter based on non-negotiable constraints:

- Compliance: Does the solution meet data privacy regulations (e.g., GDPR, HIPAA)?

- Security: Are security standards (e.g., encryption, MFA) met or exceeded?

- Support: Is there a viable support model available for enterprise-scale issues?

- License Model: Does the pricing structure align with financial planning and budgeting cycles?

- Exit Strategy: Can data be exported if the relationship ends?

If a solution fails any mandatory criterion, it is disqualified immediately. This saves time and resources that would otherwise be spent on a deep dive.
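The pass/fail filter above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `Candidate` class, the criterion keys, and the sample tools are all hypothetical names chosen for the example, and a real checklist would record evidence alongside each answer.

```python
from dataclasses import dataclass

# The five criteria mirror the checklist above; the key names are illustrative.
MANDATORY_CRITERIA = (
    "compliance",
    "security",
    "support",
    "license_model",
    "exit_strategy",
)


@dataclass
class Candidate:
    name: str
    checks: dict  # criterion name -> True (pass) or False (fail)


def failed_criteria(candidate):
    """Return the mandatory criteria the candidate fails.

    A criterion with no recorded answer is treated as a failure.
    """
    return [c for c in MANDATORY_CRITERIA if not candidate.checks.get(c, False)]


def passes_filter(candidate):
    """A candidate passes only if it fails no mandatory criterion."""
    return not failed_criteria(candidate)


# Hypothetical candidates: Tool B offers no way to export data.
all_pass = {c: True for c in MANDATORY_CRITERIA}
candidates = [
    Candidate("Tool A", dict(all_pass)),
    Candidate("Tool B", {**all_pass, "exit_strategy": False}),
]

shortlist = [c.name for c in candidates if passes_filter(c)]
print(shortlist)
print(failed_criteria(candidates[1]))
```

Treating an unanswered criterion as a failure is a deliberate choice in this sketch: it keeps a candidate off the shortlist until every non-negotiable constraint has been explicitly confirmed.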

2.2 Fit-Gap Analysis

For solutions passing the mandatory filter, perform a high-level fit-gap analysis. Compare the capabilities of the new solution against the current architectural standards.

- Integration Points: How will this connect to the existing API ecosystem?

- Data Model: Does the data schema align with master data management strategies?

- Authentication: Can it integrate with the identity provider?

- Infrastructure: Does it run on-premises, in a specific cloud, or as SaaS?

This analysis highlights where customization might be required. Significant customization often indicates a poor fit, as it increases maintenance overhead and upgrade complexity.

Phase 3: Deep Evaluation and Scoring 📊

Solutions that pass the initial filter enter the deep evaluation phase. Here, quantitative and qualitative metrics are applied to determine relative value.

3.1 The Evaluation Matrix

Use a weighted scoring model to compare finalists objectively. Assign weights based on organizational priorities. A solution that is cheaper but less secure may score lower than a slightly more expensive, highly secure alternative.

| Category | Weight | Criteria | Score (1-5) |
|---|---|---|---|
| Technical Architecture | 30% | Scalability, API design, Modularity | |
| Business Value | 25% | ROI, Time to Value, Feature Completeness | |
| Risk & Compliance | 25% | Security posture, Regulatory adherence, Vendor stability | |
| Cost of Ownership | 20% | Licensing, Implementation, Maintenance, Training | |

Note: The weights above are examples. Adjust them based on specific project needs. For a financial institution, the Risk & Compliance weight should be significantly higher. For a startup, Time to Value might carry more weight.
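To make the arithmetic concrete, the following sketch computes a weighted total using the example weights from the matrix. The category keys, the function name, and the two sets of scores are hypothetical values invented for illustration. A higher score means a better result in every category, so a 5 for Cost of Ownership means low cost.

```python
# Example weights from the evaluation matrix; adjust to organizational priorities.
WEIGHTS = {
    "technical_architecture": 0.30,
    "business_value": 0.25,
    "risk_compliance": 0.25,
    "cost_of_ownership": 0.20,
}


def weighted_score(scores, weights=WEIGHTS):
    """Return the weighted total (between 1 and 5) for one solution."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for category in weights:
        if not 1 <= scores[category] <= 5:
            raise ValueError(f"{category} score must be between 1 and 5")
    return sum(weights[c] * scores[c] for c in weights)


# Hypothetical finalists: one is cheaper but weaker on security,
# the other costs more but has a stronger risk and compliance profile.
cheaper_tool = {
    "technical_architecture": 4,
    "business_value": 4,
    "risk_compliance": 2,
    "cost_of_ownership": 5,
}
secure_tool = {
    "technical_architecture": 4,
    "business_value": 4,
    "risk_compliance": 5,
    "cost_of_ownership": 3,
}

print(round(weighted_score(cheaper_tool), 2))
print(round(weighted_score(secure_tool), 2))
```

With these made-up scores, the more secure option totals about 4.05 against about 3.70 for the cheaper one, which illustrates how a cheaper but less secure solution can rank lower once the weights are applied.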

3.2 Proof of Concept (PoC)

Numbers on a spreadsheet do not tell the whole story. A Proof of Concept validates the solution in a real-world environment.

- Scope Limitation: Define a clear, limited scope for the PoC. It should not be a full implementation.

- Success Criteria: Establish specific metrics for success (e.g., “Reduce latency by 20%”, “Enable 50 concurrent users”).

- Duration: Keep it short (e.g., 2-4 weeks) to maintain momentum.

- Team: Include both technical staff and business stakeholders to get diverse feedback.

During the PoC, document friction points. If the user experience is confusing or the documentation is sparse, this is a red flag. Technical capability does not guarantee usability.
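Success criteria are easier to enforce when they are written down as explicit thresholds before the PoC starts. The sketch below checks the two example criteria mentioned above (a 20% latency reduction and 50 concurrent users). The function names and the measured values are hypothetical, and real criteria would come from the PoC charter.

```python
def latency_reduction_pct(baseline_ms, poc_ms):
    """Percentage reduction in latency relative to the baseline."""
    if baseline_ms <= 0:
        raise ValueError("baseline latency must be positive")
    return (baseline_ms - poc_ms) / baseline_ms * 100


def evaluate_poc(
    baseline_latency_ms,
    poc_latency_ms,
    concurrent_users,
    min_latency_reduction_pct=20.0,
    min_concurrent_users=50,
):
    """Return a pass/fail result for each success criterion."""
    reduction = latency_reduction_pct(baseline_latency_ms, poc_latency_ms)
    return {
        "latency_reduction": reduction >= min_latency_reduction_pct,
        "concurrent_users": concurrent_users >= min_concurrent_users,
    }


# Hypothetical measurements: latency drops from 250 ms to 190 ms (a 24% cut),
# but the environment only sustained 40 concurrent users.
results = evaluate_poc(
    baseline_latency_ms=250.0,
    poc_latency_ms=190.0,
    concurrent_users=40,
)
print(results)
print("PoC passed" if all(results.values()) else "PoC did not meet all criteria")
```

Reporting each criterion separately, rather than a single pass/fail flag, keeps the discussion with stakeholders focused on which target was missed.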

Phase 4: Selection and Pilot Deployment 🚀

Once the best option is selected, move to a controlled pilot deployment. This bridges the gap between evaluation and full adoption.

4.1 Pilot Scope Definition

Select a non-critical business unit or a specific subset of data for the pilot. This minimizes risk if the solution fails. The pilot should mimic production conditions as closely as possible without affecting critical operations.

- User Group: Choose a group of power users who can provide detailed feedback.

- Timeline: Set a start and end date. Pilots often drag on without deadlines.

- Support Channel: Establish a dedicated channel for pilot issues to ensure rapid resolution.

4.2 Integration with Governance

Even during the pilot, governance processes must be followed. Security reviews, change management tickets, and architectural sign-offs should not be skipped. This ensures that when the solution moves to production, it is already compliant.

Phase 5: Full Adoption and Integration 🔄

Successful pilots lead to full adoption. This phase focuses on migration, training, and long-term support.

5.1 Migration Strategy

Plan the transition from old to new carefully. Common strategies include:

- Big Bang: Switch over completely on a specific date. High risk, but the shortest transition period.

- Phased Rollout: Deploy by region, department, or user group. Lower risk, slower timeline.

- Parallel Run: Run both systems simultaneously for a period. Ensures data accuracy but doubles workload.

Choose the strategy based on the criticality of the system and the tolerance for disruption.

5.2 Knowledge Transfer

Technology is only as good as the people using it. Invest in training and documentation.

- Internal Documentation: Create architecture diagrams and integration guides.

- User Manuals: Develop role-based guides for end-users.

- Training Sessions: Host workshops to demonstrate new workflows.

- Support Playbooks: Equip helpdesk teams with troubleshooting steps.

Failure to transfer knowledge often leads to shadow IT, where users bypass the new system because they do not understand it.

Governance and Stakeholder Management 👥

Throughout the entire framework, governance ensures accountability. Clear roles prevent confusion and decision paralysis.

6.1 Roles and Responsibilities

| Role | Responsibility |
|---|---|
| Enterprise Architect | Ensures alignment with long-term strategy and standards. |
| Security Officer | Validates security posture and compliance requirements. |
| Business Sponsor | Defines business value and approves budget. |
| Technical Lead | Oversees implementation and technical feasibility. |
| Procurement | Manages contracts, licensing, and vendor relationships. |

6.2 Change Management

Introducing new technology changes how people work. Resistance is natural. Address this through transparent communication.

- Explain the Why: Clearly articulate why the change is happening.

- Highlight Benefits: Show how the change makes individual jobs easier.

- Listen to Concerns: Create feedback loops to address fears and issues.

- Celebrate Wins: Acknowledge early adopters and successes.

Pitfalls to Avoid ⚠️

Even with a framework, organizations can stumble. Awareness of common pitfalls helps navigate them.

- Ignoring Total Cost of Ownership: Focusing only on license fees ignores implementation, training, and maintenance costs.

- Vendor Lock-in: Choosing solutions that make it difficult to switch providers in the future.

- Skipping Security Reviews: Rushing deployment without proper security assessment.

- Over-Engineering: Trying to make the solution fit every edge case rather than the core use case.

- Disregarding User Experience: A powerful tool is useless if users find it frustrating.

Measuring Success 📈

After adoption, the framework must validate that the investment yielded results. Define Key Performance Indicators (KPIs) early.

- Adoption Rate: Percentage of target users actively using the system.

- Performance Metrics: Latency, uptime, and throughput compared to baselines.

- Cost Savings: Reduction in licensing or operational expenses.

- Incident Reduction: Fewer bugs or support tickets than were logged against the old system.

- Time to Market: Speed of delivering new features or capabilities.

Regular reviews (quarterly or semi-annually) ensure the technology continues to meet needs. If a solution no longer aligns with business goals, the framework should allow for deprecation. Technology is not static; it must evolve or be retired.
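Two of the KPIs listed above reduce to simple formulas: adoption rate is active users divided by target users, and most performance or cost metrics can be expressed as a percentage change against a baseline. The sketch below shows both. The function names and the figures are hypothetical and stand in for whatever the organization's monitoring and licensing data provide.

```python
def adoption_rate(active_users, target_users):
    """Percentage of target users actively using the system."""
    if target_users <= 0:
        raise ValueError("target_users must be positive")
    return active_users / target_users * 100


def change_vs_baseline(current, baseline):
    """Percentage change relative to a baseline; negative means a reduction."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


# Hypothetical review figures.
print(f"Adoption rate: {adoption_rate(340, 400):.1f}%")
print(f"Latency change: {change_vs_baseline(180, 240):+.1f}%")
print(f"Monthly support tickets change: {change_vs_baseline(45, 60):+.1f}%")
```

Capturing the baseline before rollout matters here: without a pre-adoption measurement, the percentage change cannot be computed at review time.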

Continuous Improvement 🔄

The technology scouting framework is not a one-time project. It is a living process that evolves with the organization.

- Review Criteria: Update evaluation metrics as security standards or business goals shift.

- Update Vendors: Regularly re-evaluate current vendors against the market.

- Feedback Loops: Incorporate lessons learned from past projects into future scouting.

- Training: Keep the scouting team updated on emerging technologies.

By treating the framework as a continuous improvement cycle, the organization maintains agility. This approach ensures that technology remains an enabler rather than a constraint.

Summary of Framework Steps 📝

- Identify: Gather opportunities aligned with strategy.

- Filter: Apply mandatory compliance and security checks.

- Evaluate: Score solutions using a weighted matrix.

- PoC: Test in a limited environment.

- Pilot: Deploy to a small group with support.

- Adopt: Full rollout with training and migration.

- Measure: Track KPIs and iterate.

Implementing this structure brings order to chaos. It allows enterprise architects to make decisions based on data and strategy rather than hype. The result is a resilient, adaptable, and value-driven technology landscape. 🏁